Maturity of the container ecosystem

Synopsis:

The introduction of a software container standard, the emergence of reduced-cost “hybrid cloud” deployments, and competition among open source, proprietary, and alternative tool-set makers have created a diverse and thriving ecosystem for cloud-native computing.

Disclaimer:

All contents herein are the opinions of the author alone and carry no express guarantee of veracity or prudence.

Recap:

History:

Previously, I wrote about the emergence of software containerization with Docker.

In short, containerization is a scheme for distributing and deploying software packaged in “neatly packed, standard containers” that can be run (almost) anywhere.

This removes much of the tiresome, complicated, and (process-wise) unreliable system configuration that used to accompany software deployment.

In addition, container orchestration tools such as Kubernetes allow these standard “software in a box” containers to be deployed at a dizzyingly massive scale.

Containerized infrastructure on Kubernetes can also be scaled up or down to meet demand, making it an attractive option for many developers and businesses.

from jpetazzo’s container.training
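To make “scaled up or down to meet demand” a bit more concrete, here is a minimal sketch of a proportional scaling rule, modelled on the formula given in the Kubernetes Horizontal Pod Autoscaler documentation. The function name, parameters, and default bounds are my own illustrative assumptions, and the real autoscaler adds tolerances and stabilization windows that are left out here.

```python
import math

def desired_replicas(current_replicas, current_metric, target_metric,
                     min_replicas=1, max_replicas=10):
    """Proportional scaling rule, clamped to [min_replicas, max_replicas].

    Hypothetical helper: scale the replica count by how far the observed
    metric (e.g. average CPU percent) is from its target.
    """
    raw = math.ceil(current_replicas * current_metric / target_metric)
    return max(min_replicas, min(max_replicas, raw))

# 4 replicas running at 90% CPU against a 60% target: scale up.
print(desired_replicas(4, 90, 60))
# The same 4 replicas at 30% CPU: scale down.
print(desired_replicas(4, 30, 60))
```

The clamp matters as much as the formula: without an upper bound, a traffic spike (or a bad metric) could request far more capacity than you intended to pay for.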

I also gave an example of how the business history of containers in global shipping has close parallels to that of containerization in software:

Pioneering businessman Malcolm McLean had the foresight to donate the design for his uniform intermodal shipping containers to the International Organization for Standardization (ISO) as he sought to make his container shipping fleet of re-purposed WWII ships able to dock and unload at any port worldwide.

In 1956, most cargoes were loaded and unloaded by hand by longshoremen. Hand-loading a ship cost $5.86 a ton at that time. Using containers, it cost only 16 cents a ton to load a ship, 36-fold savings

(+)
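As a quick sanity check on the quoted figures, the arithmetic can be worked through directly. The variable names below are mine; the two cost figures are the ones quoted above.

```python
# Per-ton loading costs quoted above (1956 US dollars).
hand_loading_cost = 5.86
container_loading_cost = 0.16

# How many times more expensive hand loading was, per ton.
savings_factor = hand_loading_cost / container_loading_cost
print(f"Hand loading cost about {savings_factor:.1f}x as much per ton")
```

The ratio comes out a little above 36, which matches the “36-fold” figure in the quote.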

Relevancy?

Just as McLean’s containers removed the need for labor-intensive “loading and unloading” of varied cargo across multiple modes of shipping by longshoremen, Docker containers are attractive because they promise that software will run the same way in a laptop development environment as in a (potentially massively scaled) production deployment.

The software container only has to be built or “packed” one time. Then, it can be run in the same way anywhere.

Docker’s donation of Containerd:

Similar to McLean’s donation of his container standard to the ISO, the Docker team donated their container runtime, containerd, as a standard to an emerging organization known as the Cloud Native Computing Foundation (CNCF), born out of the Linux Foundation.

The Docker team’s hope was likely that, similar to McLean, they could popularize the container standard gratis in order to reap benefits on their true product: infrastructure for containers. In Docker’s case, this is the set of tools known as Docker Swarm, available in the paid version of Docker Enterprise.

Adoption of Google’s Kubernetes over Docker Swarm for container orchestration:

While this donation may have had its intended effect, much of the wind was already taken out of Docker’s sails by Google’s release of its container orchestration tool Kubernetes (or k8s for short) as free and open source.

Google’s Kubernetes had already gained the popularity, maturity, and battle-tested reputation that made it an attractive alternative to Docker Swarm for orchestrating container deployments.

And now, a thriving, diverse, mature, and open source container ecosystem:

As Google, Microsoft, Lyft, et al. have continued to publish open source code core to the infrastructure of their businesses, the Cloud Native Computing Foundation has emerged as a place where tech giants, hardware vendors, and software entrants alike collaborate and build value on top of powerful cloud-native open source tools.

Smart companies proliferate complements to their products in order to increase demand.

Therefore, it’s easy to see why so many companies have a vested interest and have banded together to ensure the success of open source software and organizations like the Linux Foundation and the CNCF.

While the tools listed in the CNCF’s projects are all but guaranteed to be powerful, well supported, and widely adopted, the continued development of the container and cloud landscape has sparked a flurry of new entrants and supporting tools that aim to extend the functionality of containerized software and cloud-native computing patterns.

This has resulted in an emergent ecosystem of healthy competition between companies, popular tools, and design patterns that strengthens the effectiveness of the landscape as a whole.

Foreword: the importance of a ‘common core’ open source infrastructure and tooling:

Think of the history of data access strategies to come out of Microsoft.

ODBC, RDO, DAO, ADO, OLEDB, now ADO.NET – All New! Are these technological imperatives? The result of an incompetent design group that needs to reinvent data access every goddamn year? (That’s probably it, actually.) But the end result is just cover fire.

The competition has no choice but to spend all their time porting and keeping up, time that they can’t spend writing new features.

Look closely at the software landscape. The companies that do well are the ones who rely least on big companies and don’t have to spend all their cycles catching up and re-implementing and fixing bugs that crop up only on Windows XP. The companies who stumble are the ones who spend too much time reading tea leaves to figure out the future direction of Microsoft.

Given the possibility of a similar situation arising with dominant cloud providers, as well as the business competition considerations, an open source “common core” for back-end technology infrastructure is worth serious consideration.

Intro: an analogy

For the sake of an analogy, let’s say you own a car manufacturer. You manufacture cars, SUVs, and light trucks at your factory.

For distribution, you’d like to ship your cars worldwide and on various modes of shipping. You want to be sure the cars don’t get dinged up during shipping and the various shipping infrastructure can handle moving them around. You decide on using the standard inter-modal shipping container and its existing shipping ecosystem.

There are three major providers that promise to ship as many cars as you like, but at a hefty price.

(sidenote: uh, where is this website?)

- Aardvark shipping is the dominant shipper in the industry. They have great services, additional options, and make it easy to get going. But, they’re expensive, and they charge an extra fee if you want to transfer over to a different provider or do your own shipping part-way.

- Azul shipping is a decent size, and promises good customer service. They also work with you pretty well if you’d like to do some or most of the shipping yourself.

- Goodboy shipping pioneered some cool things with large-scale shipping, and have some other services that are really high-IQ stuff. They’re still a bit expensive though.

Your secret however? You have a fondness for Cuban cigars, caviar, and fine dining. You decide that you’d like to cut out the middle man, and ship the containers yourself if you can.

You find some like-minded car manufacturers, and go over what you’ll need to get started:

- Somewhere to put the design of your car containers once they’re ready to be shipped

- Some kind of infrastructure for orchestrating the shipment of containers to customers

- Some kind of system to monitor the containers

- Some kind of system to make your cars discoverable, and to let them communicate outside of their container during shipping (this part of the analogy is tortured).

- Something that can coordinate standard types of shipments that you or other car manufacturers are likely to perform

- Others!

You also figure you could save money and have further flexibility with your shipping by taking a ‘hybrid approach’. You can do most of the shipping yourself, but if you have a surge of demand you can scale out to the bigger providers.
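The ‘hybrid approach’ boils down to a simple overflow rule, sketched below under the assumption that demand and capacity can each be expressed as a single number. The function and its parameter names are hypothetical, made up for the analogy rather than taken from any real tool.

```python
def split_shipments(demand, own_capacity):
    """Return (handled in-house, overflow sent to an outside provider).

    Hypothetical helper: fill your own fleet first, and only pay a big
    provider for whatever your own capacity cannot cover.
    """
    in_house = min(demand, own_capacity)
    overflow = max(0, demand - own_capacity)
    return in_house, overflow

# A normal week fits entirely on your own ships.
print(split_shipments(demand=80, own_capacity=100))
# A surge week spills the excess over to a bigger provider.
print(split_shipments(demand=130, own_capacity=100))
```

In cloud terms, this is roughly what people mean by “bursting”: the baseline load runs on infrastructure you control, and only the excess is sent to a public provider.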

Resources:

The documents and items detailed below are by no means comprehensive, nor are they necessarily the best technical solutions from a design standpoint. As container technologies emerge, there may very well be a lead-in period where multiple competing platforms and toolsets persist. Technical decisions of this nature should be undertaken carefully.

Regardless, it is likely that there is room for multiple standards in each tool-space to suit the needs of particular use cases.

1. The open source container repository:

What good is a fast car if you’ve got nowhere to go with it?

Or (better), what good are your home-grown software containers if you’ve got no way to distribute them across your systems?

Option A: Harbor

Harbor is a container image and Helm chart repository currently incubating in the Cloud Native Computing Foundation (CNCF). It can be installed with some of the same container tools it seeks to support, and has multiple nifty features. At a passing glance, it currently seems to be the more popular of the two options presented here.

Option B: Quay

Red Hat Quay is a container image repository that is part of Red Hat’s all-encompassing hybrid cloud/Kubernetes OpenShift platform. While probably not as popular as Harbor, it may have more support and easier integration with other components of OpenShift due to the backing of the (now substantially sized) Red Hat.

2. Container orchestration

Winner: Kubernetes

Google developed Kubernetes for managing large numbers of containers. Instead of assigning each container to a host machine, Kubernetes groups containers into pods. For instance, a multi-tier application, with a database in one container and the application logic in another container, can be grouped into a single pod. The administrator only needs to move a single pod from one compute resource to another, rather than worrying about dozens of individual containers.

Google itself has made the process even easier on its own Google Cloud Service. It offers a production-ready version of Kubernetes called the Google Container Engine.

–The New Stack’s “The Docker & Container Ecosystem” pg. 47
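The pod idea from the quote above can be illustrated with a toy data model. This is only a sketch: the class and function names are my own assumptions and not the Kubernetes API, and real Kubernetes recreates a pod on another node rather than literally moving it.

```python
from dataclasses import dataclass, field

@dataclass
class Container:
    name: str
    image: str

@dataclass
class Pod:
    # A pod groups containers that are always scheduled together.
    name: str
    containers: list = field(default_factory=list)

@dataclass
class Node:
    name: str
    pods: list = field(default_factory=list)

def move_pod(pod, source, target):
    """Reschedule a pod: every container in it travels as one unit."""
    source.pods.remove(pod)
    target.pods.append(pod)

# The two-tier example from the quote: database plus application logic.
shop = Pod("shop", [Container("db", "postgres"), Container("app", "shop-app")])
node_a, node_b = Node("node-a", [shop]), Node("node-b")

move_pod(shop, node_a, node_b)
print([c.name for c in node_b.pods[0].containers])  # ['db', 'app']
```

The point of the grouping is that the scheduler only ever reasons about one object per application unit, however many containers sit inside it.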

HashiCorp Nomad (+)

From HashiCorp, the maker of tools you’ve probably used, including Vagrant and Terraform.

Kubernetes is the 800-pound gorilla of container orchestration.

It powers some of the biggest deployments worldwide, but it comes with a price tag. Especially for smaller teams, it can be time-consuming to maintain and has a steep learning curve. For what our team of four wanted to achieve at trivago, it added too much overhead. So we looked into alternatives — and fell in love with Nomad.

…

On top of that, the Kubernetes ecosystem is still rapidly evolving. It takes a fair amount of time and energy to stay up-to-date with the best practices and latest tooling. Kubectl, minikube, kubeadm, helm, tiller, kops, oc – the list goes on and on. Not all tools are necessary to get started with Kubernetes, but it’s hard to know which ones are, so you have to be at least aware of them. Because of that, the learning curve is quite steep.

…

Batteries not included

Nomad is the 20% of service orchestration that gets you 80% of the way. All it does is manage deployments. It takes care of your rollouts and restarts your containers in case of errors, and that’s about it.

The entire point of Nomad is that it does less: it doesn’t include fine-grained rights management or advanced network policies, and that’s by design. Those components are provided as enterprise services, by a third-party, or not at all.

I think Nomad hit a sweet-spot between ease of use and expressiveness. It’s good for small, mostly independent services. If you need more control, you’ll have to build it yourself or use a different approach. Nomad is just an orchestrator.

The best part about Nomad is that it’s easy to replace. There is little to no vendor lock-in because the functionality it provides can easily be integrated into any other system that manages services. It just runs as a plain old single binary on every machine in your cluster; that’s it!

Matthias Endler — maybe you don’t need kubernetes (+)
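The “restarts your containers in case of errors” behaviour described in the quote is, at its core, a supervision loop. Below is a minimal sketch of that idea with a plain Python callable standing in for a container; the function name and the restart limit are assumptions of mine and have nothing to do with Nomad’s actual implementation or configuration.

```python
def supervise(task, max_restarts=3):
    """Run task(); if it raises, restart it up to max_restarts times.

    Returns the number of restarts that were needed.
    Re-raises the last error if the task never succeeds.
    """
    restarts = 0
    while True:
        try:
            task()
            return restarts
        except Exception:
            if restarts == max_restarts:
                raise
            restarts += 1

# A stand-in "container" that crashes twice before running cleanly.
attempts = {"count": 0}

def flaky_service():
    attempts["count"] += 1
    if attempts["count"] < 3:
        raise RuntimeError("crashed")

print(supervise(flaky_service))  # 2
```

A real orchestrator layers back-off delays, health checks, and rescheduling onto other machines on top of this loop, but the basic contract is the same.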

Apache Mesos (+)

Apache Mesos is a cluster manager that can help the administrator schedule workloads on a cluster of servers. Mesos excels at handling very large workloads, such as an implementation of the Spark or Hadoop data processing platforms.

Mesos had its own container image format and runtime built similarly to Docker. The project started by building the orchestration first, with the container being the side effect of needing something to actually package and contain an application. Applications were packaged in this format to be able to be run by Mesos…

Prominent users of Mesos include Twitter, Airbnb, Netflix, Paypal, SquareSpace, Uber, and more.

–The New Stack’s “The Docker & Container Ecosystem” pg. 50

Docker Swarm (+)

Docker Swarm is a clustering and scheduling tool that automatically optimizes a distributed application’s infrastructure based on the application’s lifecycle stage, container usage and performance needs.

Swarm has multiple models for determining scheduling, including understanding how specific containers will have specific resource requirements. Working with a scheduling algorithm, Swarm determines which engine and host it should be running on. The core aspect of Swarm is that as you go to multi-host, distributed applications, the developer wants to maintain the experience and portability. For example, it needs the ability to use a specific cluster solution for an application you are working with. This would ensure cluster capabilities are portable all the way from the laptop to the production environment.

–The New Stack’s “The Docker & Container Ecosystem” pg. 50
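To give a feel for what “working with a scheduling algorithm” might look like, here is a toy placement function. The strategy names echo the “spread” and “binpack” strategies that standalone Docker Swarm offered, but the data layout and logic below are my own simplified assumptions, not Swarm’s code.

```python
def pick_node(nodes, needed_mem_mb, strategy="spread"):
    """Choose a node name for a container needing needed_mem_mb of memory.

    nodes maps node name -> {"free_mem_mb": int, "containers": int}.
    "spread" prefers the node running the fewest containers;
    "binpack" prefers the node running the most.
    Returns None when no node has enough free memory.
    """
    candidates = [name for name, info in nodes.items()
                  if info["free_mem_mb"] >= needed_mem_mb]
    if not candidates:
        return None
    chooser = min if strategy == "spread" else max
    return chooser(candidates, key=lambda name: nodes[name]["containers"])

cluster = {
    "node-a": {"free_mem_mb": 512, "containers": 5},
    "node-b": {"free_mem_mb": 2048, "containers": 1},
    "node-c": {"free_mem_mb": 128, "containers": 0},
}

print(pick_node(cluster, 256))                      # node-b
print(pick_node(cluster, 256, strategy="binpack"))  # node-a
```

Spreading favours resilience (a failed host takes fewer containers with it), while bin-packing favours density (idle hosts can be kept free for large workloads or switched off).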

3. Monitoring

Prometheus (+)

Prometheus is an open-source systems monitoring and alerting toolkit originally built at SoundCloud.

Prometheus does one thing and it does it well. It has a simple yet powerful data model and a query language that lets you analyse how your applications and infrastructure are performing. It does not try to solve problems outside of the metrics space, leaving those to other more appropriate tools.

Prometheus is primarily written in Go and licensed under the Apache 2.0 license.

-From Tikam02’s DevOps Guide
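The “simple yet powerful data model” is easiest to appreciate by looking at what a scraped metric looks like on the wire: a metric name, a set of key/value labels, and a number. The sketch below renders that text format by hand rather than using the official client library; the function is a hypothetical helper of mine, and it skips the label-value escaping a real exporter would need.

```python
def render_metric(name, help_text, metric_type, samples):
    """Render one metric family in a Prometheus-style text format.

    samples is a list of (labels_dict, value) pairs.
    Simplification: label values are not escaped.
    """
    lines = [f"# HELP {name} {help_text}", f"# TYPE {name} {metric_type}"]
    for labels, value in samples:
        label_str = ",".join(f'{k}="{v}"' for k, v in sorted(labels.items()))
        series = f"{name}{{{label_str}}}" if label_str else name
        lines.append(f"{series} {value}")
    return "\n".join(lines) + "\n"

print(render_metric(
    "http_requests_total", "Total HTTP requests.", "counter",
    [({"method": "get", "code": "200"}, 1027),
     ({"method": "post", "code": "500"}, 3)],
))
```

Each distinct label combination is its own time series, which is what lets a query such as `rate(http_requests_total[5m])` be sliced by method or status code later.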

Grafana (+)

Grafana provides a powerful and elegant way to create, explore, and share dashboards and data with your team and the world.

Grafana is most commonly used for visualizing time series data for Internet infrastructure and application analytics but many use it in other domains including industrial sensors, home automation, weather, and process control.

Grafana works with Graphite, Elasticsearch, Cloudwatch, Prometheus, InfluxDB & More.

Grafana features pluggable panels and data sources allowing easy extensibility and a variety of panels, including fully featured graph panels with rich visualization options. There is built in support for many of the most popular time series data sources.

Other Monitoring

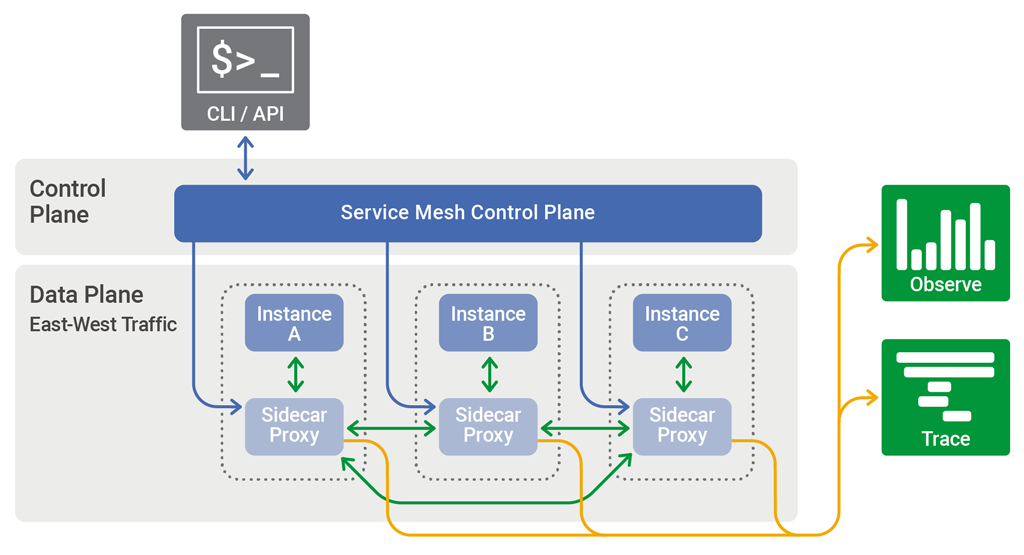

4. Service Meshes (+):

What is a Service Mesh?

Istio (+)

What is Istio?

Istio is an open source service mesh initially developed by Google, IBM and Lyft. The project was announced in May 2017, with its 1.0 version released in July 2018. Istio is built on top of the Envoy proxy which acts as its data plane. Although it is quite clearly the most popular service mesh available today, it is for all practical purposes only usable with Kubernetes.

–glasnostic.com

United States Department of Defense is betting on Kubernetes and Istio:

As hybrid cloud strategies go, the U.S. military certainly is taking a unique approach.

Just like almost everything else, military organizations increasingly depend on software, and they are turning to an array of open source cloud tools like Kubernetes and Istio to get the job done, according to a presentation delivered by Nicholas Chaillan, chief software officer for the U.S. Air Force, at KubeCon 2019 in San Diego. Those tools have to be deployed in some very interesting places, from weapons systems to even fighter planes. Yes, F-16s are running Kubernetes on the legacy hardware built into those jets.

…

Chaillan and his team decided to embrace open source software as the foundation of the new development platform, which they called the DoD Enterprise DevSecOps Initiative. This initiative specified a combination of Kubernetes, Istio, knative and an internally developed specification for “hardening” containers with a strict set of security requirements as the default software development platform across the military.

…

The scale at which the DoD operates is unlike almost all commercial operations; Chaillan had to train 100,000 people on the principles of DevSecOps, not to mention the new tools.

-The New Stack’s “How the U.S. Air Force Deployed Kubernetes and Istio on an F-16 in 45 days”

Linkerd (+)

Linkerd (rhymes with “chickadee”) is the original service mesh created by Buoyant, which coined the term in 2016. It is the official service mesh project supported by the Cloud-Native Computing Foundation. Like Twitter’s Finagle, on which it was based, Linkerd was originally written in Scala and designed to be deployed on a per-host basis.

Criticisms of its comparatively large memory footprint subsequently led to the development of Conduit, a lightweight service mesh specifically for Kubernetes, written in Rust and Go.

The Conduit project has since been folded into Linkerd, which relaunched as Linkerd 2.0 in July of 2018.

While Linkerd 2.x is currently specific to Kubernetes, Linkerd 1.x can be deployed on a per-node basis, thus making it a more flexible choice where a variety of environments need to be supported.

-glasnostic.com “comparing service meshes linkerd vs istio”

5. Installing and Managing Containerized Applications

Helm (+)

Helm helps you manage Kubernetes applications — Helm Charts help you define, install, and upgrade even the most complex Kubernetes application.

Charts are easy to create, version, share, and publish — so start using Helm and stop the copy-and-paste.

The latest version of Helm is maintained by the CNCF – in collaboration with Microsoft, Google, Bitnami and the Helm contributor community.
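The “stop the copy-and-paste” pitch is really about templating: one manifest with placeholders, plus a set of default values that each deployment can override. The sketch below imitates that idea with Python’s standard `string.Template`. It is only an analogy: Helm itself uses Go templates and a `values.yaml` file, the manifest here is abbreviated rather than a complete Deployment, and every name in it is an assumption of mine.

```python
from string import Template

# An abbreviated manifest with placeholders, standing in for a chart template.
MANIFEST = Template("""\
kind: Deployment
metadata:
  name: $name
spec:
  replicas: $replicas
  containers:
    - name: $name
      image: $image:$tag
""")

# Defaults play the role a chart's default values would.
DEFAULTS = {"name": "web", "replicas": 1, "image": "nginx", "tag": "stable"}

def render(overrides=None):
    """Merge per-deployment overrides onto the defaults and fill the template."""
    values = {**DEFAULTS, **(overrides or {})}
    return MANIFEST.substitute(values)

# Same template, two environments: only the values differ.
print(render())
print(render({"replicas": 3, "tag": "1.25"}))
```

Keeping the template fixed and varying only the values is what makes upgrades and rollbacks tractable: the difference between two releases is a small, reviewable set of values rather than a hand-edited copy of the whole manifest.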

6. Others

Kubernauts The Golden Kubernetes Tooling and Helpers List

Collabnix Kubetools (Curated List of Kubernetes Tools)

crn.com/slide-shows/cloud/the-10-hottest-kubernetes-tools-and-technologies-of-2019/

Other Junk: github.com/mkrupczak3?tab=repositories

DevOps Guide:

- Travis: Travis Concepts, Travis Commands

- GitHub Actions: Actions Concepts, Actions Tutorial

- What is Devops - AWS

- DevOps Roadmap by kamranahmedse

- Devops Roadmap by Nguyen Truong Duong

- Roadmap To devops

- r/devops

- IBM Kubernetes Handson Labs

- Getting Started With Azure DevOps

- Getting started with Google Cloud Platform

- Freecodecamp Devops Getting Started Articles

- The-devops-roadmap-for-programmers

- DevOps Getting Started

- How-to-get-started-with-devops

- Going-from-it-to-devops

- Add More Notes on OS/Linux

- Add more concepts of CI/CD

- Add more interview Question about OS and Networking

- Kubernetes Monitoring - Prometheus and Grafana

- Add IaC concepts and Tools

- Add - AWS CloudFormation,Terraform,Chef,Ansible,Puppet

- Helm - charts


This project is licensed under the MIT License - Copyright (c) 2019 Tikam Alma

Thanks for reading!
